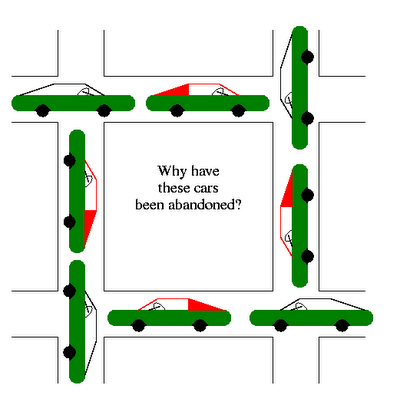

A deadlock is the scenario where an instance of object A is waiting on a specific record mapped to object B at the same time that the object B record is waiting on object A. While transactions are running, Postgres locks rows, which under certain scenarios leads to deadlock. Postgres can get into this state if two transactions concurrently modify a table. When it happens, Postgres tells us that process 1 is blocked by process 2 and process 2 is blocked by process 1.

The model involved declares a number of dependent associations:

    has_many :approvals, as: :approvable, :dependent => :destroy
    has_one :commission_split, :dependent => :destroy
    has_one :contact, as: :contactable, :dependent => :destroy
    has_many :assets, as: :attachable, :dependent => :destroy
    has_many :assigned_users, as: :assignable, dependent: :destroy

The immediate need is to resolve the situation so that users do not see a stream of 500 errors. The first step is to identify the PostgreSQL process. Listing the running processes shows the infrastructure processes associated with Postgres; subtract those and you can easily see the waiting process. All that remains is to kill that process id.

Now that the immediate concern is mitigated, it is time to change our code so this does not happen again, or at least does not happen with the same results. If there is not a high likelihood that this will happen again, we would use Optimistic Locking. If there were a high likelihood of recurrence, we would use Pessimistic Locking. Optimistic Locking is implemented by Rails, whereas Pessimistic Locking is implemented at the database level.

The Optimistic part means that we can service as many users as possible, since we allow everyone to read and only apply a locking mechanism when an update is attempted. The first step is to create a migration that gives your model a designated lock column. The migration class `AddLockVersionToOrder < ActiveRecord::Migration` runs `add_column :orders, :lock_version, :integer` unless the `lock_version` column already exists on `orders`, and uses the opposite `if` check on the same column for the reverse step.

Now an `ActiveRecord::StaleObjectError` will be raised if an attempt is made to modify the same object at the same time. This works because internally `lock_version` is incremented each time the record is updated: if, when the update is attempted, the Order object's `lock_version` does not match the record's current `lock_version`, the error is raised. This means it is the application's responsibility to handle the error, for example by rescuing it and logging a message such as `logger.error "Changes to this record: # were made since you started editing."`

Pessimistic Locking kicks in at the database level when invoking either `lock!` or `with_lock`, the latter of which wraps its block inside a transaction.
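The version check behind Optimistic Locking can be illustrated without a database. The following is a minimal sketch in plain Ruby, not the Rails implementation: `OrderStore`, `StaleObjectError` (a local stand-in for `ActiveRecord::StaleObjectError`) and `demo_optimistic_locking` are hypothetical names made up for this example. It assumes the behaviour described above, namely that an update succeeds only when the caller's `lock_version` still matches the stored one, and that each successful update increments it.

```ruby
# Hypothetical in-memory stand-ins; none of these classes come from Rails.
class StaleObjectError < StandardError; end

# Plays the role of the orders table: id => attributes plus lock_version.
class OrderStore
  def initialize
    @rows = {}
  end

  def insert(id, attrs)
    @rows[id] = attrs.merge(lock_version: 0)
  end

  # Returns a private copy, as each user loading the record would get.
  def find(id)
    @rows.fetch(id).dup
  end

  # Writes only if the caller's lock_version still matches the stored one,
  # then increments the stored version.
  def update(id, copy, changes)
    current = @rows.fetch(id)
    if current[:lock_version] != copy[:lock_version]
      raise StaleObjectError, "order #{id} was changed since it was loaded"
    end
    @rows[id] = current.merge(changes).merge(lock_version: current[:lock_version] + 1)
  end
end

# Two users load the same order; the second update is rejected as stale.
def demo_optimistic_locking
  store = OrderStore.new
  store.insert(1, status: "new")

  first_user  = store.find(1)
  second_user = store.find(1)

  store.update(1, first_user, status: "approved") # stored version becomes 1

  begin
    store.update(1, second_user, status: "cancelled")
    :updated
  rescue StaleObjectError
    :stale
  end
end
```

In a real application the rescue branch is where the stale record would be reloaded and the user asked to redo their edit, which is the "application responsibility" mentioned above.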

Within the confines of the application, trends in usage dictate whether concurrent data updates are likely to occur. Such an update potentially causes resources to be in demand from more than one source.

In concurrent systems where resources are locked, two or more processes can end up in a state in which each process is waiting for the other one. PostgreSQL automatically detects deadlock situations and resolves them by aborting one of the transactions involved, allowing the other(s) to complete. (Exactly which transaction will be aborted is difficult to predict and should not be relied upon.)

The key to diagnosing deadlocks is understanding what is causing them. Check which locks are active, and the application code that issues those queries. To view the active locks in Postgres, query the `pg_locks` view. Lock waits can also be logged by enabling the `log_lock_waits` setting, which writes a log entry when a session waits longer than `deadlock_timeout` to acquire a lock. `deadlock_timeout` is the amount of time to wait on a lock before checking to see if there is a deadlock condition.

In MySQL, you can use the `SHOW ENGINE INNODB STATUS` command to show the latest deadlock. In MySQL 5.6.2+ you can enable the `innodb_print_all_deadlocks` variable to have all deadlocks logged to the mysqld error log. The Percona Database Performance Blog has a very detailed guide for troubleshooting deadlocks.

To solve deadlocks, you must understand when and where locks are acquired in your DBMS. Research exclusive locks, shared locks, gap locks, and index locks. The solution will always involve changing queries, and will sometimes involve changing schema.
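The state in which each process is waiting for the other one can be pictured as a cycle in a wait-for graph. The sketch below is a plain Ruby illustration of that idea only; it is not how PostgreSQL or MySQL implement deadlock detection, and `find_deadlock` along with the process ids used are hypothetical. It assumes each process waits on at most one other process.

```ruby
# waits_for maps a process id to the process id it is blocked by.
# Returns the list of process ids forming a cycle, or nil if there is none.
def find_deadlock(waits_for)
  waits_for.each_key do |start|
    path = [start]
    current = waits_for[start]
    while current
      # Reaching a process already on the path means the waits loop back.
      return path.drop(path.index(current)) if path.include?(current)
      path << current
      current = waits_for[current]
    end
  end
  nil
end

# Process 1 is blocked by process 2 and process 2 is blocked by process 1.
deadlocked = find_deadlock({ 1 => 2, 2 => 1 })

# Process 1 waits on 2 and 2 waits on 3, but 3 is free to finish: no deadlock.
chain = find_deadlock({ 1 => 2, 2 => 3 })
```

Once a cycle is found, aborting any one transaction in it breaks the loop and lets the others complete, which is why the database only needs to abort a single participant.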
